General Research Types

It is useful to consider the various research methodologies we have described as falling within one or more general research categories: descriptive, associational, or intervention-type studies.

DESCRIPTIVE STUDIES

Descriptive studies describe a given state of affairs as fully and carefully as possible. One of the best examples of descriptive research is found in botany and zoology, where each variety of plant and animal species is meticulously described and information is organized into useful taxonomic categories. In educational research, the most common descriptive methodology is the survey, as when researchers summarize the characteristics (abilities, preferences, behaviors, and so on) of individuals or groups or (sometimes) physical environments (such as schools). Qualitative approaches, such as ethnographic and historical methodologies, are also primarily descriptive in nature. Examples of descriptive studies in education include identifying the achievements of various groups of students; describing the behaviors of teachers, administrators, or counselors; describing the attitudes of parents; and describing the physical capabilities of schools. The description of phenomena is the starting point for all research endeavors.

Descriptive research in and of itself, however, is not very satisfying, since most researchers want to have a more complete understanding of people and things. This requires a more detailed analysis of the various aspects of phenomena and their interrelationships. Advances in biology, for example, have come about, in large part, as a result of the categorization of descriptions and the subsequent determination of relationships among these categories.

ASSOCIATIONAL RESEARCH

Educational researchers also want to do more than simply describe situations or events. They want to know how (or if), for example, differences in achievement are related to such things as teacher behavior, student diet, student interests, or parental attitudes. By investigating such possible relationships, researchers are able to understand phenomena more completely. Furthermore, the identification of relationships enables one to make predictions. If researchers know that student interest is related to achievement, for example, they can predict that students who are more interested in a subject will demonstrate higher achievement in that subject than students who are less interested. Research that investigates relationships is often referred to as associational research.
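The link between an observed relationship and prediction can be illustrated with a small sketch. The interest ratings and achievement scores below are hypothetical values invented for illustration, and the helper names (pearson_r, fit_line, predict) are our own; the sketch computes a correlation coefficient and then uses a least-squares line to predict achievement from interest.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Return the Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


def fit_line(xs, ys):
    """Return (slope, intercept) of the least-squares line predicting y from x."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical data: interest ratings (1-10) and test scores for five students.
interest = [2, 4, 5, 7, 9]
achievement = [55, 60, 70, 75, 90]

r = pearson_r(interest, achievement)
slope, intercept = fit_line(interest, achievement)


def predict(interest_rating):
    """Predicted achievement score for a given interest rating."""
    return intercept + slope * interest_rating


print(f"r = {r:.2f}")
print(f"predicted score at interest 3: {predict(3):.1f}")
print(f"predicted score at interest 8: {predict(8):.1f}")
```

A strong positive coefficient supports the prediction that more interested students will tend to score higher. It does not, by itself, show that raising interest would raise achievement.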

Correlational and causal-comparative methodologies are the principal examples of associational research. Other examples include studying relationships between achievement and attitude, between childhood experiences and adult characteristics, or between teacher characteristics and student achievement (all of which are correlational studies), and between methods of instruction and achievement (comparing students who have been taught by each method) or between gender and attitude (comparing attitudes of males and females), both of which are causal-comparative studies.

As useful as associational studies are, they too are ultimately unsatisfying because they do not permit researchers to “do something” to influence or change outcomes. Simply determining that student interest is predictive of achievement does not tell us how to change or improve either interest or achievement, although it does suggest that increasing interest would increase achievement. To find out whether one thing will have an effect on something else, researchers need to conduct some form of intervention study.

INTERVENTION STUDIES

In intervention studies, a particular method or treatment is expected to influence one or more outcomes. Such studies enable researchers to assess, for example, the effectiveness of various teaching methods, curriculum models, classroom arrangements, and other efforts to influence the characteristics of individuals or groups. Intervention studies can also contribute to general knowledge by confirming (or failing to confirm) theoretical predictions (for instance, that abstract concepts can be taught to young children). The primary methodology used in intervention research is the experiment.

Some types of educational research may combine these three general approaches. Although historical, ethnographic, and other qualitative research methodologies are primarily descriptive in nature, at times they may be associational if the investigator examines relationships. A descriptive historical study of college entrance requirements over time that examines the relationship between those requirements and achievement in mathematics is also associational. An ethnographic study that describes in detail the daily activities of an inner-city high school and also finds a relationship between media attention and teacher morale in the school is both descriptive and associational. An investigation of the effects of different teaching methods on concept learning that also reports the relationship between concept learning and gender is an example of a study that is both an intervention and an associational-type study.
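To make the idea of assessing the effectiveness of a teaching method more concrete, here is a minimal sketch of one common way to summarize the result of a two-group experiment: a standardized mean difference (often called Cohen's d). The scores are hypothetical, the function name cohens_d is our own, and the choice of this particular statistic is our assumption rather than something the discussion above prescribes.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(treatment, control):
    """Standardized mean difference between two groups, using the pooled SD."""
    n_t, n_c = len(treatment), len(control)
    # Pool the two sample variances, weighting each by its degrees of freedom.
    pooled_var = (
        (n_t - 1) * variance(treatment) + (n_c - 1) * variance(control)
    ) / (n_t + n_c - 2)
    return (mean(treatment) - mean(control)) / sqrt(pooled_var)


# Hypothetical post-test scores for students taught by a new method
# (treatment) and by the usual method (control).
new_method = [78, 85, 90, 72, 88]
usual_method = [70, 75, 80, 68, 77]

d = cohens_d(new_method, usual_method)
print(f"standardized mean difference d = {d:.2f}")
```

A positive value indicates that the treatment group scored higher on average. Whether the difference can be attributed to the method depends on how the experiment was designed, which this calculation does not address.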

META-ANALYSIS

Meta-analysis is an attempt to reduce the limitations of individual studies by trying to locate all of the studies on a particular topic and then using statistical means to synthesize the results of these studies. Some limitations apply to all types of research, while others are more likely to apply to particular types.
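One widely used way of synthesizing results statistically is to average the effect sizes from the individual studies, giving more weight to the more precise studies. The sketch below shows this inverse-variance (fixed-effect) approach. It is only one of several possible methods, its selection here is our assumption, and the effect sizes and variances are hypothetical.

```python
from math import sqrt


def pool_fixed_effect(effects, variances):
    """Return (pooled effect, standard error) using inverse-variance weights."""
    # A study with a smaller sampling variance receives a larger weight.
    weights = [1.0 / v for v in variances]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, effects)) / total_weight
    standard_error = sqrt(1.0 / total_weight)
    return pooled, standard_error


# Hypothetical effect sizes and sampling variances from three studies.
study_effects = [0.2, 0.5, 0.3]
study_variances = [0.04, 0.01, 0.02]

pooled, se = pool_fixed_effect(study_effects, study_variances)
print(f"pooled effect = {pooled:.2f}, standard error = {se:.3f}")
```

The pooled estimate lies closest to the result of the second study, which has the smallest variance and therefore the largest weight.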

Critical Analysis of Research

There are some who feel that researchers who engage in the kinds of research we have just described take a bit too much for granted; indeed, that they make a number of unwarranted (and usually unstated) assumptions about the nature of the world in which we live. These critics (usually referred to as critical researchers) raise a number of philosophical, linguistic, ethical, and political questions not only about educational research as it is usually conducted but also about all fields of inquiry, ranging from the physical sciences to literature.

In an introductory text, we cannot hope to do justice to the many arguments and concerns these critics have raised over the years. What we can do is provide an introduction to some of the major questions they have repeatedly asked.

The first issue is the question of reality: As any beginning student of philosophy is well aware, there is no way to demonstrate whether anything “really exists.” There is, for example, no way to prove conclusively to others that I am looking at what I call a pencil (e.g., others may not be able to see it; they may not be able to tell where I am looking; I may be dreaming). Further, it is easily demonstrated that different individuals may describe the same individual, action, or event quite differently—leading some critics to the conclusion that there is no such thing as reality, only individual (and different) perceptions of it. One implication of this view is that any search for knowledge about the “real” world is doomed to failure.

We would acknowledge that what the critics say is correct: We cannot, once and for all, “prove” anything, and there is no denying that perceptions differ. We would argue, however, that our commonsense notion of reality (that what most knowledgeable persons agree exists is what is real) has enabled humankind to solve many problems—even the question of how to put a man on the moon.

The second issue is the question of communication. Let us assume that we can agree that some things are “real.” Even so, the critics argue that it is virtually impossible to show that we use the same terms to identify these things. For example, it is well known that the Inuit have many different words (and meanings) for the English word snow. To put it differently, no matter how carefully we define even a simple term such as shoe, the possibility always remains that one person’s shoe is not another’s. (Is a slipper a shoe? Is a shower clog a shoe?) If so much of language is imprecise, how then can relationships or laws, which try to indicate how various terms, things, or ideas are connected, be precise? Again, we would agree. People often do not agree on the meaning of a word or phrase. We would argue, however (as we think would most researchers), that we can define terms clearly enough to enable different people to agree sufficiently about what words mean that they can communicate and thus get on with the acquisition of useful knowledge.

The third issue is the question of values. Historically, scientists have often claimed to be value free, that is, “objective,” in their conduct of research. Critics have argued, however, that what is studied in the social sciences, including the topics and questions with which educational researchers are concerned, is never objective but rather socially constructed.

Such things as teacher-student interaction in classrooms, the performance of students on examinations, the questions teachers ask, and a host of other issues and topics of concern to educators do not exist in a vacuum. They are influenced by the society and times in which people live. As a result, such topics and concerns, as well as how they are defined, inevitably reflect the values of that society. Further, even in the physical sciences, the choice of problems to study and the means of doing so reflect the values of the researchers involved.

Here, too, we would agree. We think that most researchers in education would acknowledge the validity of the critics’ position. Many critical researchers charge, however, that such agreement is not sufficiently reflected in research reports. They say that many researchers fail to admit or identify “where they are coming from,” especially in their discussions of the findings of their research.

The fourth issue is the question of unstated assumptions. An assumption is anything that is taken for granted rather than tested or checked. Although this issue is similar to the previous issue, it is not limited to values but applies to both general and specific assumptions that researchers make with regard to a particular study. Some assumptions are so generally accepted that they are taken for granted by practically all social researchers (e.g., the sun will come out; the earth will continue to rotate). Other assumptions are more questionable. An example given by Krathwohl (2009) clarifies this. He points out that if researchers change the assumptions under which they operate, this may lead to different consequences. If we assume, for example, that mentally limited students learn in the same way as other students but more slowly, then it follows that given sufficient time and motivation, they can achieve as well as other students. The consequences of this view are to give these individuals more time, to place them in classes where the competition is less intense, and to motivate them to achieve. If, on the other hand, we assume that they use different conceptual structures into which they fit what they learn, this assumption leads to a search for simplified conceptual structures they can learn that will result in learning that approximates that of other students. Frequently authors do not make such assumptions clear.

In many studies, researchers implicitly assume that the terms they use are clear, that their samples are appropriate, and that their measurements are accurate. Designing a good study can be seen as trying to reduce these kinds of assumptions to a minimum. Readers should always be given enough information so that they do not have to make such assumptions. Figure 2 illustrates how an assumption can often be incorrect.

The fifth issue is the question of societal consequences. Critical theorists argue that traditional research efforts (including those in education) predominantly serve political interests that are, at best, conservative or, at worst, oppressive. They point out that such research is almost always focused on improving existing practices rather than raising questions about the practices themselves. They argue that, intentional or not, the efforts of most educational researchers have served essentially to reinforce the status quo. A more extreme position alleges that educational institutions (including research), rather than enlightening the citizenry, have served instead to prepare them to be uncritical functionaries in an industrialized society.

We would agree with this general criticism but note that there have been a number of investigations of the status quo itself, followed by suggestions for improvement, that have been conducted and offered by researchers of a variety of political persuasions.

Let us examine each of these issues in relation to a hypothetical example. Suppose a researcher decides to study the effectiveness of a course in formal logic in improving the ability of high school students to analyze arguments and arrive at defensible conclusions from data. The researcher accordingly designs a study that is sound enough in terms of design to provide at least a partial answer as to the effectiveness of the course. Let us address the five issues presented above in relation to this study.

1. The question of reality: The abilities in question (analyzing arguments and reaching accurate conclusions) clearly are abstractions. They have no physical reality per se. But does this mean that such abilities do not “exist” in any way whatsoever? Are they nothing more than artificial by-products of our conceptual language system? Clearly, this is not the case. Such abilities do indeed exist in a somewhat limited sense, as when we talk about the “ability” of a person to do well on tests. But is test performance indicative of how well a student can perform in real life? If it is not, is the performance of students on such tests important? A critic might allege that the ability to analyze, for example, is situation specific: Some people are good analyzers on tests; others, in public forums; others, of written materials; and so forth. If this is so, then the concept of a general ability to “analyze arguments” would be an illusion. We think a good argument can be made that this is not the case, based on commonsense experience and on some research findings. We must admit, however, that the critic has a point (we don’t know for sure how general this ability is), and one that should not be overlooked.

2. The question of communication: Assuming that these abilities do exist, can we define them well enough so that meaningful communication can result? We think so, but it is true that even the clearest of definitions does not always guarantee meaningful communication. This is often revealed when we discover that the way we use a term differs from how someone else uses the same term, despite previous agreement on a definition. We may agree, for example, that a “defensible conclusion” is one that does not contradict the data and that follows logically from the data, yet still find ourselves disagreeing as to whether or not a particular conclusion is a defensible one. Debates among scientists often boil down to differences as to what constitutes a defensible conclusion from data.

3. The question of values: Researchers who decide to investigate outcomes such as the ones in this study make the assumption that the outcomes are either desirable (and thus to be enhanced) or undesirable (and thus to be diminished), and they usually point out why this is so. Seldom, however, are the values (of the researchers) that led to the study of a particular outcome discussed. Are these outcomes studied because they are considered of highest priority? because they are traditional? socially acceptable? easier to study? financially rewarding?

The researcher’s decision to study whether a course in logic will affect the ability of students to analyze arguments reflects his or her values. Both the outcomes and the method studied reflect Eurocentric ideas of value; the Aristotelian notion of the “rational man” (or woman) is not dominant in all cultures. Might some not claim, in fact, that we need people in our society who will question basic assumptions more than we need people who can argue well from these assumptions? While researchers probably cannot be expected to discuss such complex issues in every study, these critics render a service by urging all of us interested in research to think about how our values may affect our research endeavors.

4. The question of unstated assumptions: In carrying out such a study, the researcher is assuming not only that the outcome is desirable but that the findings of the study will have some influence on educational practice. Otherwise, the study is nothing more than an academic exercise. Educational methods research has often been criticized for leading to suggested practices that, for various reasons, are unlikely to be implemented. While we believe that such studies should still be done, researchers have an obligation to make such assumptions clear and to discuss their reasonableness.

5. The question of societal consequences: Finally, let us consider the societal implications of a study such as this. Critics might allege that this study, while perhaps defensible as a scientific endeavor, will have a negative overall impact. How so? First, by fostering the idea that the outcome being studied (the ability to analyze arguments) is more important than other outcomes (e.g., the ability to see novel or unusual relationships). This allegation has, in fact, been made for many years in education: that researchers have overemphasized the study of some outcomes at the expense of others.

A second allegation might be that such research serves to perpetuate discrimination against the less privileged segments of society. If it is true, as some contend, that some cultures are more “linear” and others more “global,” then a course in formal logic (being primarily linear) may increase the advantage already held by students from the dominant linear culture (Ramirez and Casteneda 1974). It can be argued that a fairer approach would teach a variety of argumentative methods, thereby capitalizing on the strengths of all cultural groups.

To summarize, we have attempted to present the major issues raised by an increasingly vocal part of the research community. These issues involve the nature of reality, the difficulty of communication, the recognition that values always affect research, unstated assumptions, and the consequences of research for society as a whole. While we do not agree with some of the specific criticisms raised by these writers, we believe the research enterprise is the better for their efforts.

D. R. Krathwohl (2009). Methods of educational and social science research, 3rd ed. Long Grove, IL: Waveland Press, p. 91.

M. Ramirez and A. Casteneda (1974). Cultural democracy, bicognitive development, and education. New York: Academic Press.

DESCRIPTIVE STUDIES

Descriptive studies describe a given state of affairs as fully and carefully as possible. One of the best examples of descriptive research is found in botany and zoology, where each variety of plant and animal species is meticulously described and information is organized into useful taxonomic categories. In educational research, the most common descriptive methodology is the survey, as when researchers summarize the characteristics (abilities, preferences, behaviors, and so on) of individuals or groups or (sometimes) physical environments (such as schools). Qualitative approaches, such as ethnographic and historical methodologies are also primarily descriptive in nature. Examples of descriptive studies in education include identifying the achievements of various groups of students; describing the behaviors of teachers, administrators, or counselors; describing the attitudes of parents; and describing the physical capabilities of schools. The description of phenomena is the starting point for all research endeavors.

Descriptive research in and of itself, however, is not very satisfying, since most researchers want to have a more complete understanding of people and things. This requires a more detailed analysis of the various aspects of phenomena and their interrelationships. Advances in biology, for example, have come about, in large part, as a result of the categorization of descriptions and the subsequent determination of relationships among these categories.

ASSOCIATIONAL RESEARCH

Educational researchers also want to do more than simply describe situations or events. They want to know how (or if), for example, differences in achievement are related to such things as teacher behavior, student diet, student interests, or parental attitudes. By investigating such possible relationships, researchers are able to understand phenomena more completely. Furthermore, the identification of relationships enables one to make predictions. If researchers know that student interest is related to achievement, for example, they can predict that students who are more interested in a subject will demonstrate higher achievement in that subject than students who are less interested. Research that investigates relationships is often referred to as associational research.

Correlational and causalcomparative methodologies are the principal examples of associational research. Other examples include studying relationships between achievement and attitude, between childhood experiences and adult characteristics, or between teacher characteristics and student achievement—all of which are correlational studies—and between methods of instruction and achievement (comparing students who have been taught by each method) or between gender and attitude (comparing attitudes of males and females)—both of which are causal-comparative studies.

As useful as associational studies are, they too are ultimately unsatisfying because they do not permit researchers to “do something” to infl uence or change outcomes. Simply determining that student interest is predictive of achievement does not tell us how to change or improve either interest or achievement, although it does suggest that increasing interest would increase achievement. To fi nd out whether one thing will have an effect on something else, researchers need to conduct some form of intervention study.

INTERVENTION STUDIES

In intervention studies , a particular method or treatment is expected to infl uence one or more outcomes. Such studies enable researchers to assess, for example, the effectiveness of various teaching methods, curriculum models, classroom arrangements, and other efforts to influence the characteristics of individuals or groups. Intervention studies can also contribute to general knowledge by confi rming (or failing to confirm) theoretical predictions (for instance, that abstract concepts can be taught to young children). The primary methodology used in intervention research is the experiment. Some types of educational research may combine these three general approaches. Although historical,ethnographic, and other qualitative research methodologies are primarily descriptive in nature, at times they may be associational if the investigator examines relationships. A descriptive historical study of college entrance requirements over time that examines the relationship between those requirements and achievement in mathematics is also associational. An ethnographic study that describes in detail the daily activities of an inner-city high school and also finds a relationship between media attention and teacher morale in the school is both descriptive and associational. An investigation of the effects of different teaching methods on concept learning that also reports the relationship between concept learning and gender is an example of a study that is both an intervention and an associational-type study.

META-ANALYSIS

Meta-analysis is an attempt to reduce the limitations of individual studies by trying to locate all of the studies on a particular topic and then using statistical means to synthesize the results of these studies. Some research apply to all types, while others are more likely to apply to particular types.

Critical Analysis of Research

There are some who feel that researchers who engage in the kinds of research we have just described take a bit too much for granted—indeed, that they make a number of unwarranted (and usually unstated) assumptions about the nature of the world in which we live. These critics (usually referred to as critical researchers) raise a number of philosophical, linguistic, ethical, and political questions not only about educational research as it is usually conducted but also about all fi elds of inquiry, ranging from the physical sciences to literature.

In an introductory text, we cannot hope to do justice to the many arguments and concerns these critics have raised over the years. What we can do is provide an introduction to some of the major questions they have repeatedly asked.

The first issue is the question of reality: As any beginning student of philosophy is well aware, there is no way to demonstrate whether anything “really exists.” There is, for example, no way to prove conclusively to others that I am looking at what I call a pencil (e.g., others may not be able to see it; they may not be able to tell where I am looking; I may be dreaming). Further, it is easily demonstrated that different individuals may describe the same individual, action, or event quite differently—leading some critics to the conclusion that there is no such thing as reality, only individual (and different) perceptions of it. One implication of this view is that any search for knowledge about the “real” world is doomed to failure.

We would acknowledge that what the critics say is correct: We cannot, once and for all, “prove” anything, and there is no denying that perceptions differ. We would argue, however, that our commonsense notion of reality (that what most knowledgeable persons agree exists is what is real) has enabled humankind to solve many problems—even the question of how to put a man on the moon.

The second issue is the question of communication. Let us assume that we can agree that some things are “real.” Even so, the critics argue that it is virtually impossible to show that we use the same terms to identify these things. For example, it is well known that the Inuit have many different words (and meanings) for the English word snow . To put it differently, no matter how carefully we define even a simple term such as shoe, the possibility always remains that one person’s shoe is not another’s. (Is a slipper a shoe? Is a shower clog a shoe?) If so much of language is imprecise, how then can relationships or laws—which try to indicate how various terms, things, or ideas are connected—be precise? Again, we would agree. People often do not agree on the meaning of a word or phrase. We would argue, however (as we think would most researchers), that we can defi ne terms clearly enough to enable different people to agree suffi ciently about what words mean that they can communicate and thus get on with the acquisition of useful knowledge.

The third issue is the question of values. Historically, scientists have often claimed to be value free, that is, “objective,” in their conduct of research. Critics have argued, however, that what is studied in the social sciences, including the topics and questions with which educational researchers are concerned, is never objective but rather socially constructed.

Such things as teacher-student interaction in classrooms, the performance of students on examinations, the questions teachers ask, and a host of other issues and topics of concern to educators do not exist in a vacuum. They are infl uenced by the society and times in which people live. As a result, such topics and concerns, as well as how they are defined, inevitably reflect the values of that society. Further, even in the physical sciences, the choice of problems to study and the means of doing so reflect the values of the researchers involved.

Here, too, we would agree. We think that most researchers in education would acknowledge the validity of the critics’ position. Many critical researchers charge, however, that such agreement is not sufficiently reflected in research reports. They say that many researchers fail to admit or identify “where they are coming from,” especially in their discussions of the findings of their research.

The fourth issue is the question of unstated assumptions. An assumption is anything that is taken for granted rather than tested or checked. Although this issue is similar to the previous issue, it is not limited to values but applies to both general and specific assumptions that researchers make with regard to a particular study. Some assumptions are so generally accepted that they are taken for granted by practically all social researchers (e.g., the sun will come out; the earth will continue to rotate). Other assumptions are more questionable. An example given by Krathwohl (2009), clarifies this. He points out that if researchers change the assumptions under which they operate, this may lead to different consequences. If we assume, for example, that mentally limited students learn in the same way as other students but more slowly, then it follows that given sufficient time and motivation, they can achieve as well as other students. The consequences of this view are to give these individuals more time, to place them in classes where the competition is less intense, and to motivate them to achieve. If, on the other hand, we assume that they use different conceptual structures into which they fit what they learn, this assumption leads to a search for simplified conceptual structures they can learn that will result in learning that approximates that of other students. Frequently authors do not make such assumptions clear.

In many studies, researchers implicitly assume that the terms they use are clear, that their samples are appropriate, and that their measurements are accurate. Designing a good study can be seen as trying to reduce these kinds of assumptions to a minimum. Readers should always be given enough information so that they do not have to make such assumptions. Figure 2 illustrates how an assumption can often be incorrect.

The fifth issue is the question of societal consequences. Critical theorists argue that traditional research efforts (including those in education) predominantly serve political interests that are, at best, conservative or, at worst, oppressive. They point out that such research is almost always focused on improving existing practices rather than raising questions about the practices themselves. They argue that, intentional or not, the efforts of most educational researchers have served essentially to reinforce the status quo. A more extreme position alleges that educational institutions (including research), rather than enlightening the citizenry, have served instead to prepare them to be uncritical functionaries in an industrialized society.

We would agree with this general criticism but note that there have been a number of investigations of the status quo itself, followed by suggestions for improvement, that have been conducted and offered by researchers of a variety of political persuasions.

Let us examine each of these issues in relation to a hypothetical example. Suppose a researcher decides to study the effectiveness of a course in formal logic in improving the ability of high school students to analyze arguments and arrive at defensible conclusions from data. The researcher accordingly designs a study that is sound enough in terms of design to provide at least a partial answer as to the effectiveness of the course. Let us address the fi ve issues presented above in relation to this study.

1.The question of reality: The abilities in question (analyzing arguments and reaching accurate conclusions) clearly are abstractions. They have no physical reality per se. But does this mean that such abilities do not “exist” in any way whatsoever? Are they nothing more than artifi cial by-products of our conceptual language system? Clearly, this is not the case. Such abilities do indeed exist in a somewhat limited sense, as when we talk about the “ability” of a person to do well on tests. But is test performance indicative of how well a student can perform in real life? If it is not, is the performance of students on such tests important? A critic might allege that the ability to analyze, for example, is situation specific: Some people are good analyzers on tests; others, in public forums; others, of written materials; and so forth. If this is so, then the concept of a general ability to “analyze arguments” would be an illusion. We think a good argument can be made that this is not the case—based on commonsense experience and on some research fi ndings. We must admit, however, that the critic has a point (we don’t know for sure how general this ability is), and one that should not be overlooked.

2. The question of communication: Assuming that these abilities do exist, can we define them well enough so that meaningful communication can result? We think so, but it is true that even the clearest of definitions does not always guarantee meaningful communication. This is often revealed when we discover that the way we use a term differs from how someone else uses the same term, despite previous agreement on a definition. We may agree, for example, that a “defensible conclusion” is one that does not contradict the data and that follows logically from the data, yet still find ourselves disagreeing as to whether or not a particular conclusion is a defensible one. Debates among scientists often boil down to differences as to what constitutes a defensible conclusion from data.

3. The question of values: Researchers who decide to investigate outcomes such as the ones in this study make the assumption that the outcomes are either desirable (and thus to be enhanced) or undesirable (and thus to be diminished), and they usually point out why this is so. Seldom, however, are the values (of the researchers) that led to the study of a particular outcome discussed. Are these outcomes studied because they are considered of highest priority? because they are traditional? socially acceptable? easier to study? financially rewarding?

The researcher’s decision to study whether a course in logic will affect the ability of students to analyze arguments reflects his or her values. Both the outcomes and the method studied reflect Eurocentric ideas of value; the Aristotelian notion of the “rational man” (or woman) is not dominant in all cultures. Might some not claim, in fact, that we need people in our society who will question basic assumptions more than we need people who can argue well from these assumptions? While researchers probably cannot be expected to discuss such complex issues in every study, these critics render a service by urging all of us interested in research to think about how our values may affect our research endeavors.

4. The question of unstated assumptions: In carrying out such a study, the researcher is assuming not only that the outcome is desirable but that the findings of the study will have some influence on educational practice. Otherwise, the study is nothing more than an academic exercise. Educational methods research has often been criticized for leading to suggested practices that, for various reasons, are unlikely to be implemented. While we believe that such studies should still be done, researchers have an obligation to make such assumptions clear and to discuss their reasonableness.

5. The question of societal consequences: Finally, let us consider the societal implications of a study such as this. Critics might allege that this study, while perhaps defensible as a scientific endeavor, will have a negative overall impact. How so? First, by fostering the idea that the outcome being studied (the ability to analyze arguments) is more important than other outcomes (e.g., the ability to see novel or unusual relationships). This allegation, that researchers have overemphasized the study of some outcomes at the expense of others, has in fact been made for many years in education.

A second allegation might be that such research serves to perpetuate discrimination against the less privileged segments of society. If it is true, as some contend, that some cultures are more “linear” and others more “global,” then a course in formal logic (being primarily linear) may increase the advantage already held by students from the dominant linear culture (Ramirez and Casteneda 1974). It can be argued that a fairer approach would teach a variety of argumentative methods, thereby capitalizing on the strengths of all cultural groups.

To summarize, we have attempted to present the major issues raised by an increasingly vocal part of the research community. These issues involve the nature of reality, the difficulty of communication, the recognition that values always affect research, unstated assumptions, and the consequences of research for society as a whole. While we do not agree with some of the specific criticisms raised by these writers, we believe the research enterprise is the better for their efforts.

D. R. Krathwohl (2009). Methods of educational and social science research, 3rd ed. Long Grove, IL: Waveland Press, p. 91.

M. Ramirez and A. Casteneda (1974). Cultural democracy, biocognitive development and education. New York: Academic Press.

A Brief Overview of the Research Process

Regardless of methodology, all researchers engage in a number of similar activities. Almost all research plans include, for example, a problem statement, a hypothesis, definitions, a literature review, a sample of subjects, tests or other measuring instruments, a description of procedures to be followed, including a time schedule, and a description of intended data analyses. We deal with each of these components in some detail throughout this book, but we want to give you a brief overview of them before we proceed.

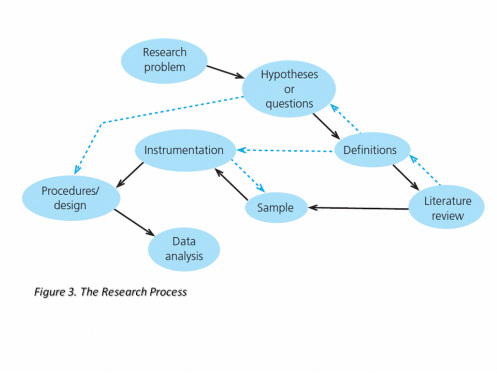

Figure 3 presents a schematic of the research components. The solid-line arrows indicate the sequence in which the components are usually presented and described in research proposals and reports. They also indicate a useful sequence for planning a study (that is, thinking about the research problem, followed by the hypothesis, followed by the definitions, and so forth). The broken-line arrows indicate the most likely departures from this sequence (for example, consideration of instrumentation sometimes results in changes in the sample; clarifying the question may suggest which type of design is most appropriate). The nonlinear pattern is intended to point out that, in practice, the process does not necessarily follow a precise sequence. In fact, experienced researchers often consider many of these components simultaneously as they develop their research plan.

Statement of the research problem: The problem of a study sets the stage for everything else. The problem statement should be accompanied by a description of the background of the problem (what factors caused it to be a problem in the first place) and a rationale or justification for studying it. Any legal or ethical ramifications related to the problem should be discussed and resolved.

Formulation of an exploratory question or a hypothesis:

Research problems are usually stated as questions, and often as hypotheses. A hypothesis is a prediction, a statement of what specific results or outcomes are expected to occur. The hypotheses of a study should clearly indicate any relationships expected between the variables (the factors, characteristics, or conditions) being investigated and be so stated that they can be tested within a reasonable period of time. Not all studies are hypothesis-testing studies, but many are.

Definitions:

All key terms in the problem statement and hypothesis should be defined as clearly as possible.

Review of the related literature:

Other studies related to the research problem should be located and their results briefly summarized. The literature review (of appropriate journals, reports, monographs, etc.) should shed light on what is already known about the problem and should indicate logically why the proposed study would result in an extension of this prior knowledge.

Sample:

The subjects (the sample) of the study and the larger group, or population (to whom results are to be generalized), should be clearly identified. The sampling plan (the procedures by which the subjects will be selected) should be described.

Instrumentation:

Each of the measuring instruments that will be used to collect data from the subjects should be described in detail, and a rationale should be given for its use.

Procedures:

The actual procedures of the study, that is, what the researcher will do (what, when, where, how, and with whom) from beginning to end, in the order in which they will occur, should be spelled out in detail (although this is not written in stone). This, of course, is much less feasible and appropriate in a qualitative study. A realistic time schedule outlining when various tasks are to be started, along with expected completion dates, should also be provided. All materials (e.g., textbooks) and/or equipment (e.g., computers) that will be used in the study should also be described.

The general design or methodology (e.g., an experiment or a survey) to be used should be stated. In addition, possible sources of bias should be identified, and how they will be controlled should be explained.

Data analysis:

Any statistical techniques, both descriptive and inferential, to be used in the data analysis should be described. The comparisons to be made to answer the research question should be made clear.
